By Emma Weber - AI Transformation Advisor and Author. Emma Weber has spent 23 years in behaviour change and learning transfer, helping organisations globally navigate the human side of AI transformation. Founder of Being Human in the Age of AI.


Governance

Shadow AI: The Four Reasons It's Happening in Your Organisation - and What Each One Tells You

22 April 2026 · 7 min read · Emma Weber

About ten days ago, Trish Uhl and I wrapped up a webinar we'd both been looking forward to for a while. Trish had done some brilliant benchmarking work - taking our own investigations into what's happening with AI adoption here in Australia, and setting them against what the global trends are telling us. The conversation with our attendees was rich and honest, and a few things have stayed with me since.

One of them is the topic of Shadow AI.

(And if you missed the webinar and want the full picture - including what we're calling the two mountains of AI transformation, and why most organisations are still climbing the first one - just drop me a message and I'll happily send across the briefing.)

But I want to dig into Shadow AI specifically. Because I think it's sitting in the blind spot of most organisational AI strategies right now - and the stakes are getting higher.

What do we mean by Shadow AI?

Shadow AI refers to employees using AI tools that haven't been officially sanctioned by their organisation. It's happening everywhere. And the important thing to understand is: it's not happening because people are being reckless or careless. It's happening for deeply human reasons.

Before we get to the "why", it's worth sitting with the "how much". There's compelling research emerging on the cost of what Forrester are calling "shadow work" - the invisible, unofficial tasks that drain employee time and energy outside of their core role.

In a study of over 8,000 workers, 76% reported engaging in shadow work during their working hours, with 59% saying the volume is increasing year-on-year. The estimated financial cost globally is over $1.7 trillion annually.

Forrester Research

Now, shadow work and Shadow AI aren't the same thing. But they're deeply connected. AI tools are increasingly what people are reaching for to manage those exact kinds of tasks - and when organisations don't provide them officially, people find their own. The demand is there whether or not the governance has caught up.

The real crux of the Shadow AI problem is not that people are doing something wrong. It's that organisations haven't created the conditions that would make it unnecessary.

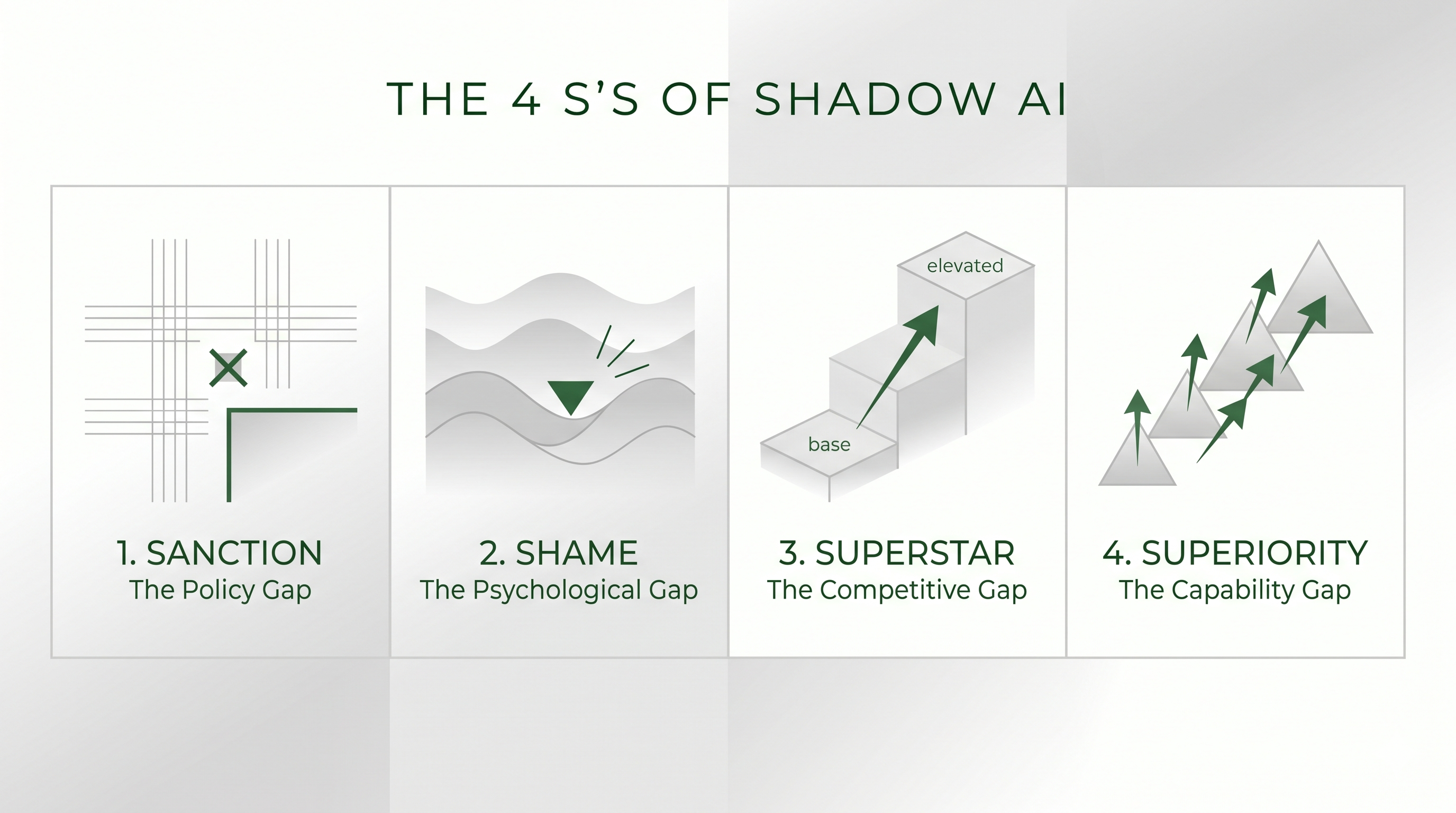

In our investigations and conversations with organisations, we've identified four distinct drivers. We call them the four S's.

The first S

Sanction - The Policy Gap

The first driver is, in some ways, the most straightforward to address. People are using Shadow AI because the organisation hasn't been clear. There are fuzzy boundaries around which tools are approved, what data can be shared with an AI model, and when AI is appropriate at all. In the absence of that clarity, people default to "easier to ask forgiveness than permission" - and quietly use the tools that work best for them.

This isn't a technology problem. It's a leadership and communication problem. And it's one that can be addressed relatively quickly when there's will to have the conversation openly.

The second S

Shame - The Psychological Gap

The second driver is more nuanced, and honestly, it's the one I find most interesting right now.

In some organisations, using AI is still quietly seen as cheating. There's a cultural undercurrent that says: if you needed AI to do that, it doesn't quite count. People feel like they're taking a shortcut, and they'd rather keep it in the shadows than risk being seen as less capable or less intelligent.

What's interesting - and what we're starting to see in our research - is that this dynamic is beginning to flip. The shame is starting to work the other way too. People who aren't engaging with AI are beginning to feel left behind. And that's its own kind of pressure.

Both versions create a climate where people hide what they're actually doing. Neither is healthy. What organisations genuinely need is a culture that supports open learning, honest experimentation, and real conversation about where AI helps and where it doesn't. And I know a thing or two about what it takes to build that kind of culture! It doesn't need to be complicated - just consistent. But it does need to go well beyond the policy memo.

The third S

Superstar - The Competitive Gap

The third driver is perhaps the most strategically significant for leaders.

When Shadow AI is giving someone a real productivity advantage - helping them work faster, produce stronger outputs, finish hours earlier - they have a genuine incentive to keep it quiet. It's become part of their edge within the organisation. Sharing it would close the gap they've worked to create.

This means organisations can end up with hidden performance inequality they have no visibility over. Some people are quietly supercharged. Others aren't. And neither group is having an honest conversation about it. Bringing the supercharged group out of the shadows gives the whole organisation a leg up, because everyone else can learn from what they've implemented.

The fourth S

Superiority - The Capability Gap

The fourth driver is the one that's moving fastest right now.

The AI tools people have access to in their personal lives - through their own accounts, their own subscriptions - are often significantly more capable than what their employer has officially approved. Enterprise versions, particularly in regulated sectors, often get stripped back in ways that limit their usefulness. So people find themselves doing work with a personal tool that the official one simply can't match. And the gap between the two, for many people, isn't narrowing.

So what does this mean for you?

If you're leading an organisation, the question isn't whether Shadow AI is happening. The chances are it is. The question is whether you understand what's actually driving it - and whether your response is going to address those root causes, or just add another layer of policy that creates compliance anxiety without changing anything on the ground.

Over the coming weeks, I'm going to go deeper on each of the four S's - because each one has a different shape, and needs a different kind of response.

In the meantime, I'd genuinely love to hear which of the four resonates most with what you're seeing in your own organisation. Is it the governance gap? The culture of shame? Something else entirely?

(Feel free to comment, or drop me a message if you'd prefer to have the conversation more quietly - no shame here!)


Frequently asked questions about shadow AI

What is shadow AI?

Shadow AI refers to employees using AI tools that haven't been officially sanctioned by their organisation. It is widespread across industries and sectors. Critically, it is not happening because people are being reckless or careless - it is happening for deeply human reasons, including unclear governance, cultural shame around AI use, competitive advantage, and gaps between enterprise and consumer AI capability.

Why do employees use shadow AI?

Emma Weber identifies four distinct drivers of shadow AI, which she calls the four S's. First, Sanction - organisations haven't been clear about which tools are approved or when AI use is appropriate, so people default to using what works. Second, Shame - in some organisations, using AI is still quietly seen as cheating, so people keep it hidden. Third, Superstar - AI is giving some employees a genuine productivity edge they're incentivised to protect rather than share. Fourth, Superiority - the AI tools available on personal accounts are often significantly more capable than what employers have officially approved.

What is the financial cost of shadow work in organisations?

Research by Forrester found that 76% of over 8,000 workers surveyed engage in shadow work - invisible, unofficial tasks that drain employee time and energy outside of their core role - during their working hours, with 59% saying the volume is increasing year-on-year. The estimated global financial cost is over $1.7 trillion annually. AI tools are increasingly what employees reach for to manage this kind of work, and when organisations don't provide them officially, people find their own.

How should organisations respond to shadow AI?

The question for organisational leaders isn't whether shadow AI is happening - it almost certainly is. The question is whether you understand which of the four drivers is operating in your organisation, and whether your response addresses root causes or simply adds another policy layer. Each driver requires a different response: a governance gap needs clarity and communication; a shame dynamic needs cultural change and psychological safety; a competitive gap requires surfacing hidden best practice; a capability gap needs honest assessment of whether official tools are fit for purpose.

What does shadow AI reveal about organisational culture?

Shadow AI is not primarily a technology governance problem - it is a cultural signal. The real crux of the shadow AI problem is not that people are doing something wrong, but that organisations haven't created the conditions that would make it unnecessary. Where shadow AI is driven by shame, it reveals a culture that hasn't built psychological safety around AI learning. Where it is driven by competitive advantage, it reveals an organisation with hidden performance inequality. In each case, the shadow AI is a symptom, and the underlying cause is human.